Alexander Taylor

London-based artist & creative technologist.

Personal projects are collated here. For a portfolio of professional work, please get in touch.

✉ a@alexandertaylor.org

2% Of Spaces You Could Possibly Encounter

AI/ML, Installations, Physical Computing, 2019, Games

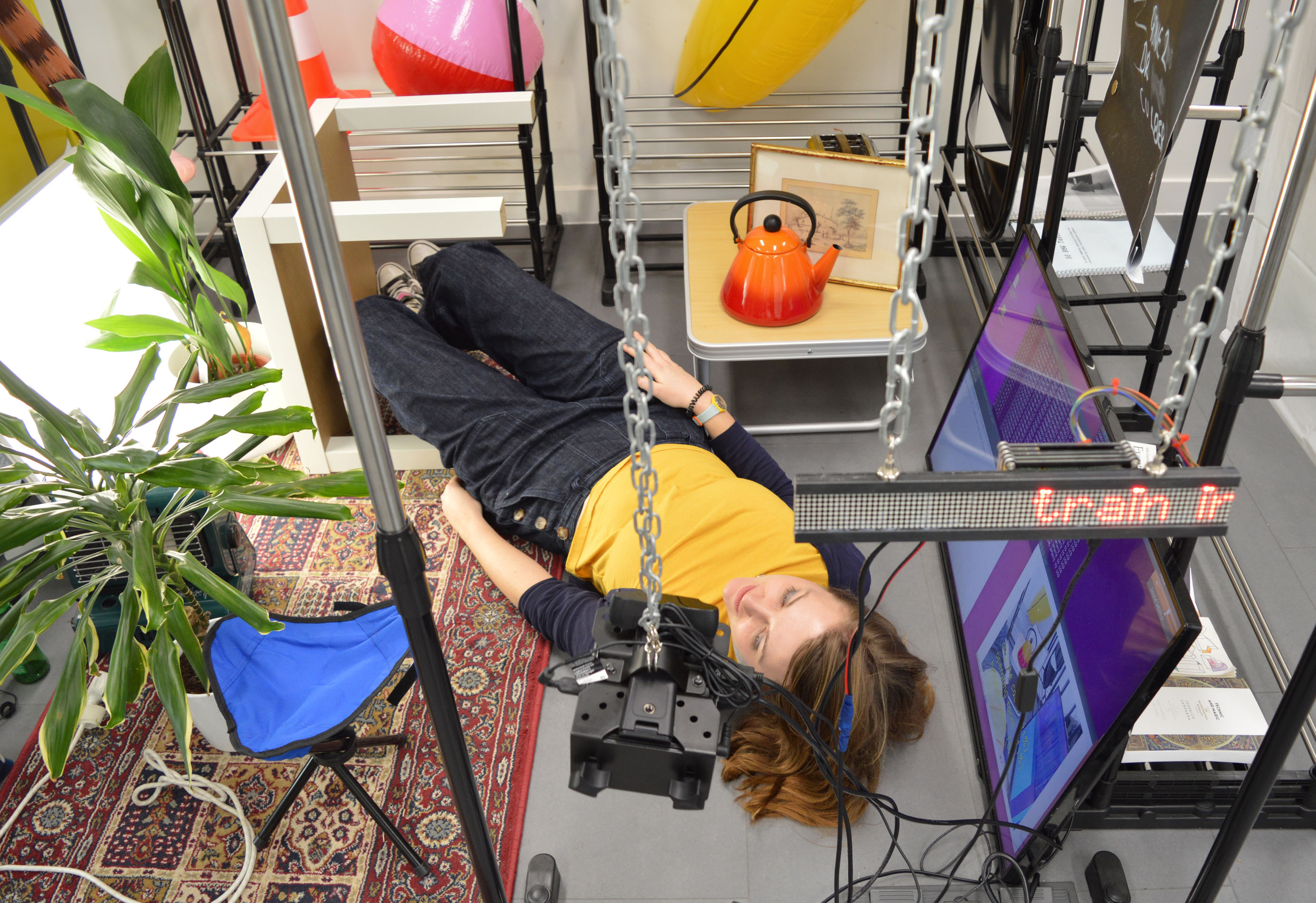

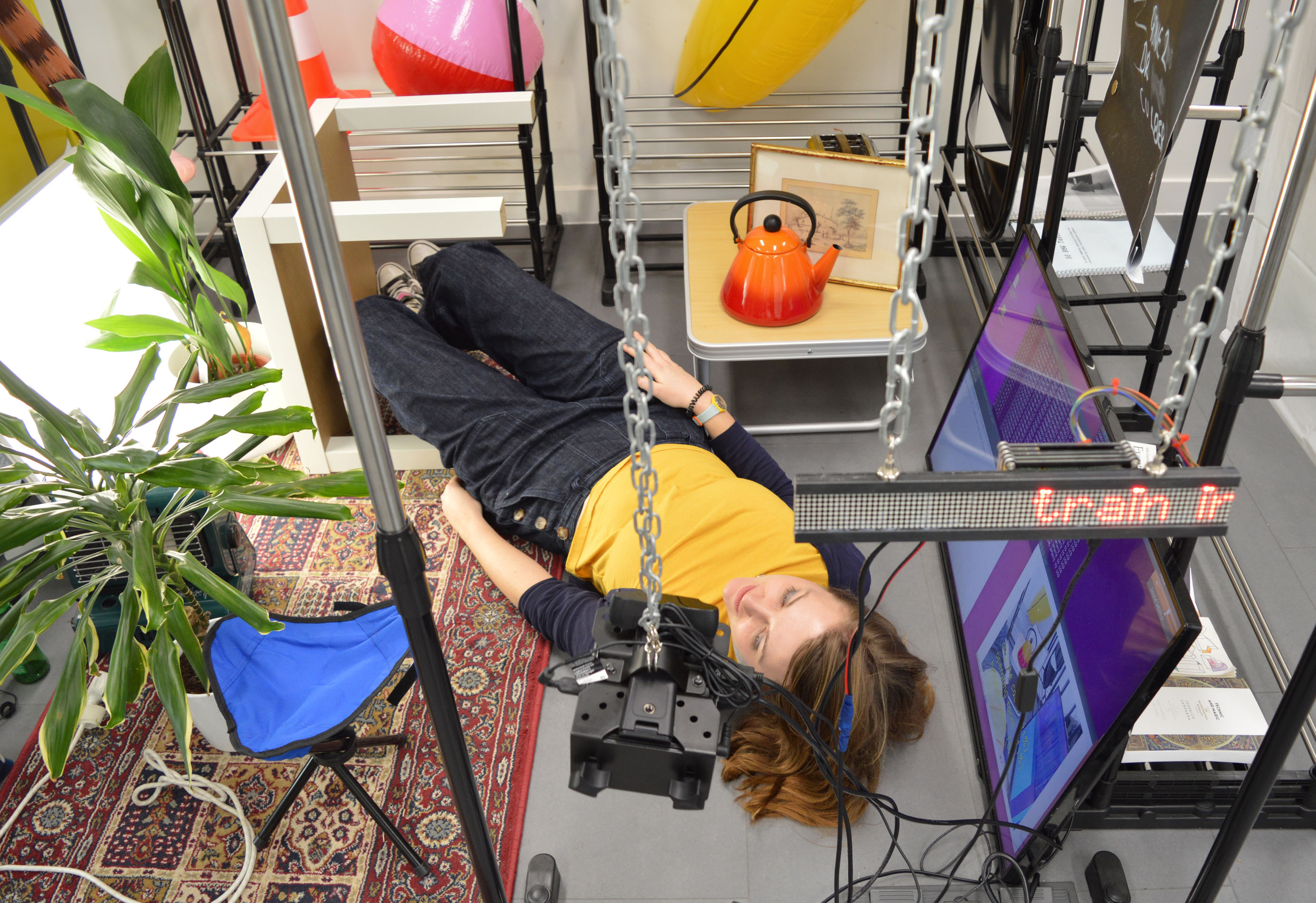

'2% Of Spaces You Could Possibly Encounter' uses machine learning to classify a scene in real time as visitors modify the layout of the space. A live feed is taken from the camera, run through a neural network trained on MIT's Places database of 10 million images, then filed into one of 365 spatial categories, ranging from amusement parks to operating theatres.
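As a rough illustration of the final "filing" step, the sketch below turns a list of raw network scores into a ranked list of categories. It is not the code used in the installation: the camera capture and the trained network are left out, the scores are made up, and the names `softmax`, `classify`, `labels` and `scores` are assumptions for this example. Three stand-in labels take the place of the 365 real categories.

```python
import math


def softmax(scores):
    """Convert raw network scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify(scores, labels, top_k=3):
    """Return the top_k (label, probability) pairs, most likely first."""
    if len(scores) != len(labels):
        raise ValueError("need exactly one score per label")
    probs = softmax(scores)
    ranked = sorted(zip(labels, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:top_k]


# Hypothetical stand-ins: the real system distinguishes 365 categories,
# and "kitchen" is only an assumed example label.
labels = ["amusement park", "operating theatre", "kitchen"]
scores = [0.2, 2.5, 1.0]  # made-up scores for a single camera frame

for label, prob in classify(scores, labels, top_k=2):
    print(f"{label}: {prob:.2f}")
```

Keeping the top few guesses rather than only the winner is a choice made for this sketch; it makes it easier to see which categories a scene is being confused with as the layout changes.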

Above header photo by Daisy Imogen Buckle.

Responding to the claim that the Places dataset can account for 98% of spaces a user could possibly encounter, visitors are encouraged to interact with the camera feed in order to reveal the quirks, blind spots and biases that can be found within the dataset. The current classification is announced via a text-to-speech system and can also be seen on a computer monitor within the space.

This project draws on ongoing research into datasets: who compiles them, how they are compiled, and what they are (and could potentially be) used for.